Unit 14: Developer Role in Human Oversight

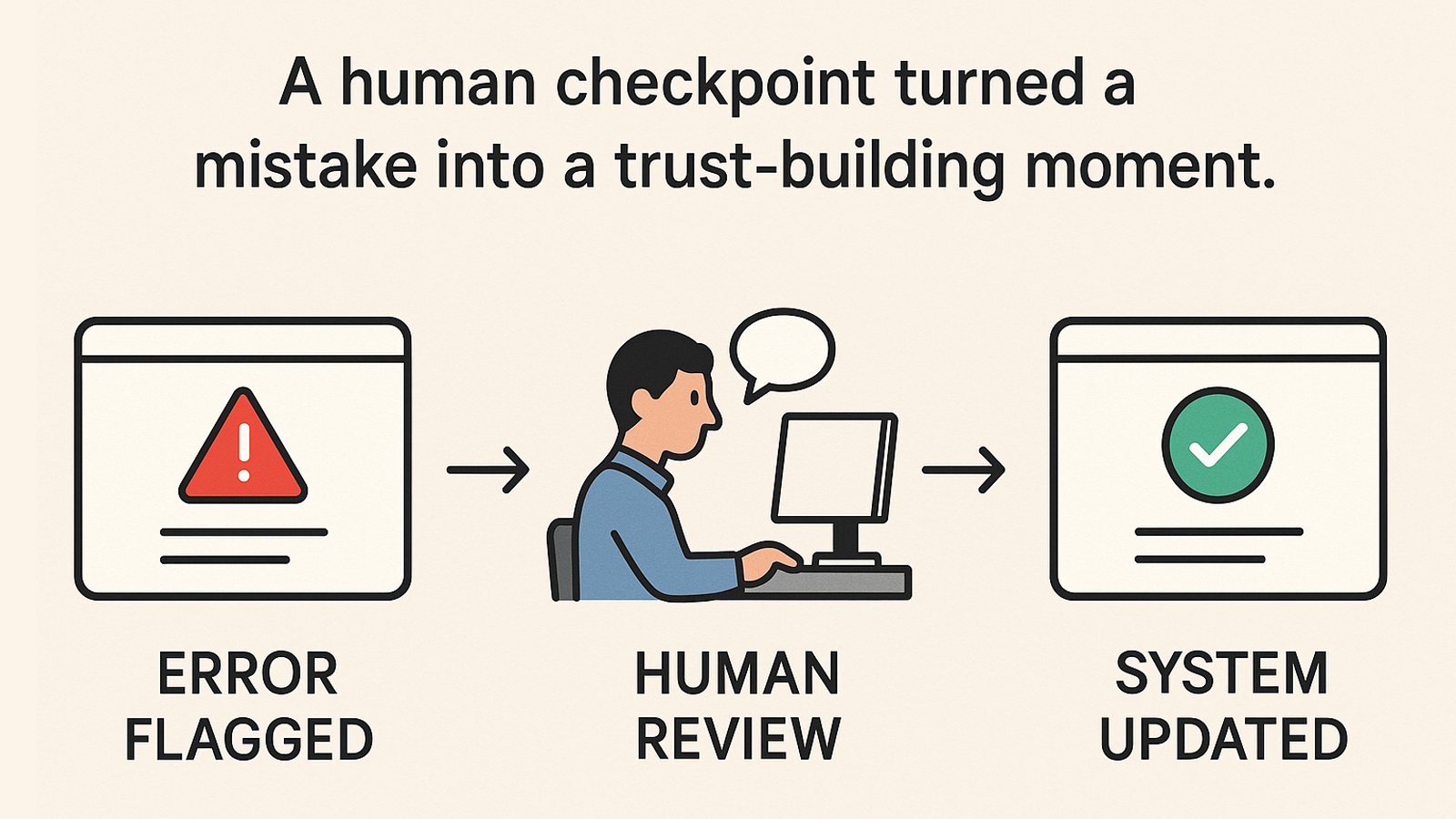

Scenario: A healthcare chatbot begins giving incorrect medication advice because its training data is outdated.

What went wrong:

No human-in-the-loop (HITL) approval of final recommendations

No escalation channel or logging mechanism

No one was assigned oversight ownership

Fix:

Added a pharmacist validation step before any advice is shown to users

Created a feedback form linked to an error escalation queue

Assigned a medical lead as system steward

📌 Result: Improved safety, clearer accountability, and restored user trust.
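From the developer's side, the fixes in this case study come down to three pieces of code: a gate that holds model output until a human approves it, an escalation queue fed by both rejections and user reports, and an audit log of every decision. The sketch below is a minimal illustration that assumes a single in-process queue. `Recommendation`, `ReviewGate`, their methods, and the `"oversight"` logger name are all hypothetical and not part of any real framework. A production system would use a persistent queue and audit store.

```python
import logging
from collections import deque
from dataclasses import dataclass
from typing import Optional

# Audit log for every oversight decision (logger name is hypothetical).
logging.basicConfig(level=logging.INFO)
log = logging.getLogger("oversight")


@dataclass
class Recommendation:
    request_id: str
    text: str
    status: str = "pending"          # "pending", "approved", or "rejected"
    reviewer: Optional[str] = None   # who signed off (e.g. a pharmacist)
    note: str = ""


class ReviewGate:
    """Hypothetical HITL gate: model output is held until a human approves it."""

    def __init__(self) -> None:
        self.pending = deque()      # drafts awaiting pharmacist review
        self.escalations = deque()  # items for the system steward to triage

    def submit(self, rec: Recommendation) -> None:
        self.pending.append(rec)
        log.info("queued %s for review", rec.request_id)

    def review(self, reviewer: str, approve: bool, note: str = "") -> Recommendation:
        # The reviewer takes the oldest pending draft (FIFO order).
        rec = self.pending.popleft()
        rec.reviewer, rec.note = reviewer, note
        rec.status = "approved" if approve else "rejected"
        if not approve:
            # Rejected drafts are escalated so the steward can find root causes.
            self.escalations.append(rec)
        log.info("%s %s by %s", rec.request_id, rec.status, reviewer)
        return rec

    def report_error(self, rec: Recommendation, note: str) -> None:
        # Entry point for the user feedback form: flag an answer already shown.
        rec.note = note
        self.escalations.append(rec)
        log.warning("user reported %s: %s", rec.request_id, note)

    def shown_to_user(self, rec: Recommendation) -> Optional[str]:
        # Only approved text is ever released to the user.
        return rec.text if rec.status == "approved" else None
```

The key design choice is that `shown_to_user` returns nothing unless a named reviewer has approved the draft, so skipping the human step fails closed rather than open. The escalation queue gives the medical lead a single place to see both pharmacist rejections and user-reported errors.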