Unit 11: Secure AI Deployment & Model Robustness

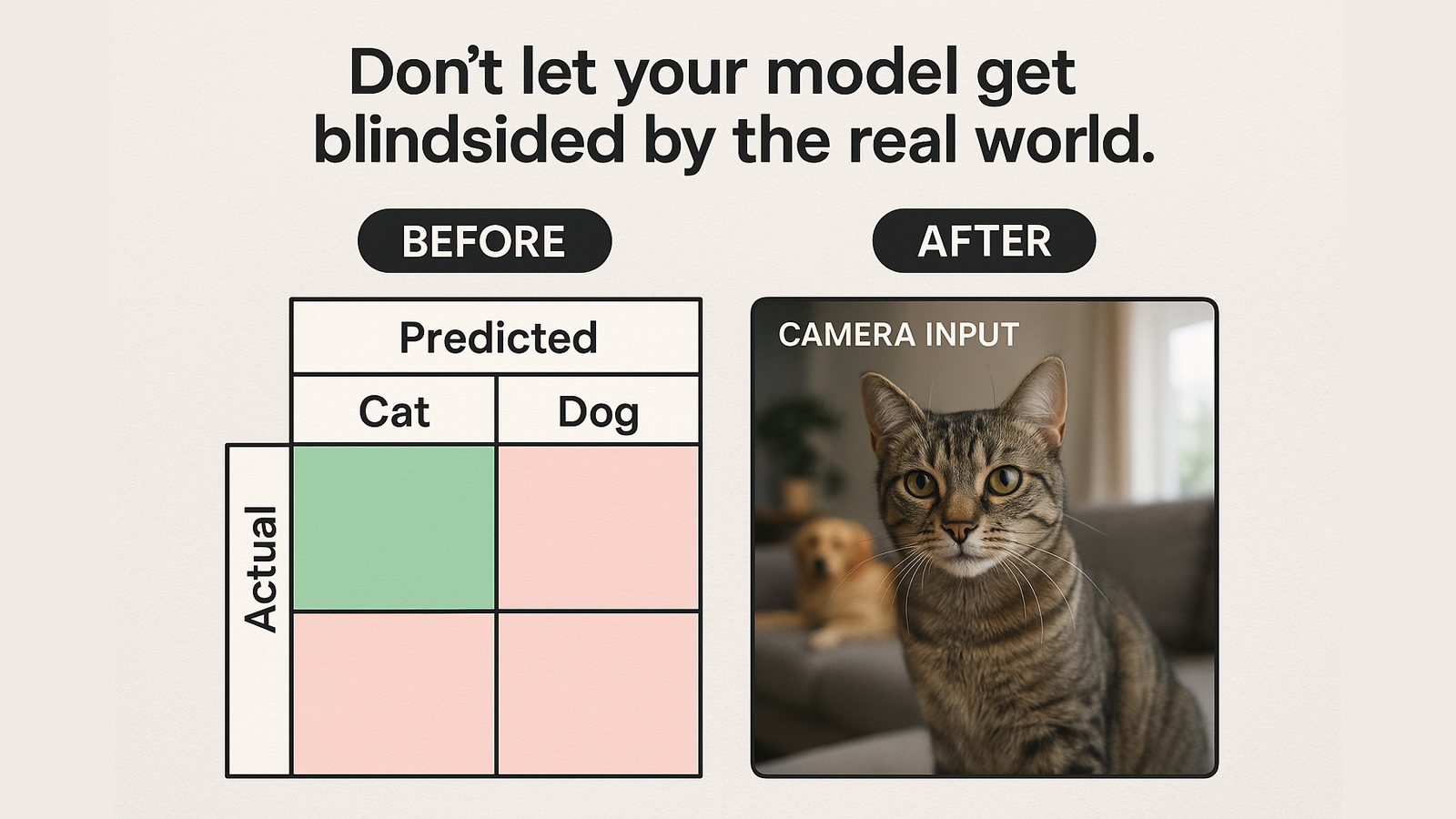

Scenario: A facial recognition system at a major retailer began producing wildly inaccurate results after a UI redesign affecting its input cameras.

Root Cause:

Model drift (here, a shift in the input data) with no post-deployment testing to catch it

Cameras fed grainier images, but the model wasn’t retrained

Mitigation:

Reintroduced regular input quality checks

Added model drift alerts

Retrained the model using a new data pipeline
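
The first two mitigations can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the retailer's actual implementation: the function names (`noise_score`, `psi`, `drift_alert`), the graininess metric, and the 0.2 alert threshold are all hypothetical choices made for this example. The Population Stability Index (PSI) is used here as one common way to compare a reference distribution with live data; the case study does not say which drift metric was used.

```python
import numpy as np


def noise_score(image: np.ndarray) -> float:
    """Rough graininess estimate for a 2-D grayscale image (assumed metric):
    mean absolute difference between horizontally adjacent pixels."""
    img = image.astype(np.float64)
    return float(np.mean(np.abs(np.diff(img, axis=1))))


def psi(reference: np.ndarray, current: np.ndarray, bins: int = 10) -> float:
    """Population Stability Index between two 1-D samples.

    Bin edges are quantiles of the reference sample, so each bin holds
    roughly the same share of reference values.
    """
    reference = np.asarray(reference, dtype=np.float64)
    current = np.asarray(current, dtype=np.float64)

    # Interior quantile edges; values outside the reference range fall
    # into the first or last bin.
    interior = np.quantile(reference, np.linspace(0.0, 1.0, bins + 1)[1:-1])
    ref_idx = np.searchsorted(interior, reference, side="right")
    cur_idx = np.searchsorted(interior, current, side="right")

    ref_frac = np.bincount(ref_idx, minlength=bins) / len(reference)
    cur_frac = np.bincount(cur_idx, minlength=bins) / len(current)

    # Avoid log(0) and division by zero for empty bins.
    eps = 1e-6
    ref_frac = np.clip(ref_frac, eps, None)
    cur_frac = np.clip(cur_frac, eps, None)

    return float(np.sum((cur_frac - ref_frac) * np.log(cur_frac / ref_frac)))


def drift_alert(reference_scores, current_scores, threshold: float = 0.2) -> bool:
    """Return True when the PSI of the quality scores exceeds the threshold.

    The default of 0.2 is an assumed rule-of-thumb value; tune it on real data.
    """
    return psi(reference_scores, current_scores) > threshold
```

In use, `noise_score` would be computed for each incoming camera frame, and `drift_alert` would compare a recent window of scores against scores recorded when the model was validated. A sudden move to grainier images would shift the score distribution and raise the alert before accuracy problems reach users.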

📌 Trust was regained, but only after it was nearly lost.